Month: April 2019

-

Monthly notes 40

Refactoring, computer science concepts on the day job, doing better code reviews, battling CSS and watching cat videos. That’s Monthly notes for April. Not much, so enjoy slowly :) Issue 40, 4.2019. Learning: Refactoring.Guru. Refactoring.Guru makes it easy for you to discover everything you need to know about refactoring, design patterns, SOLID principles and other smart programming…

-

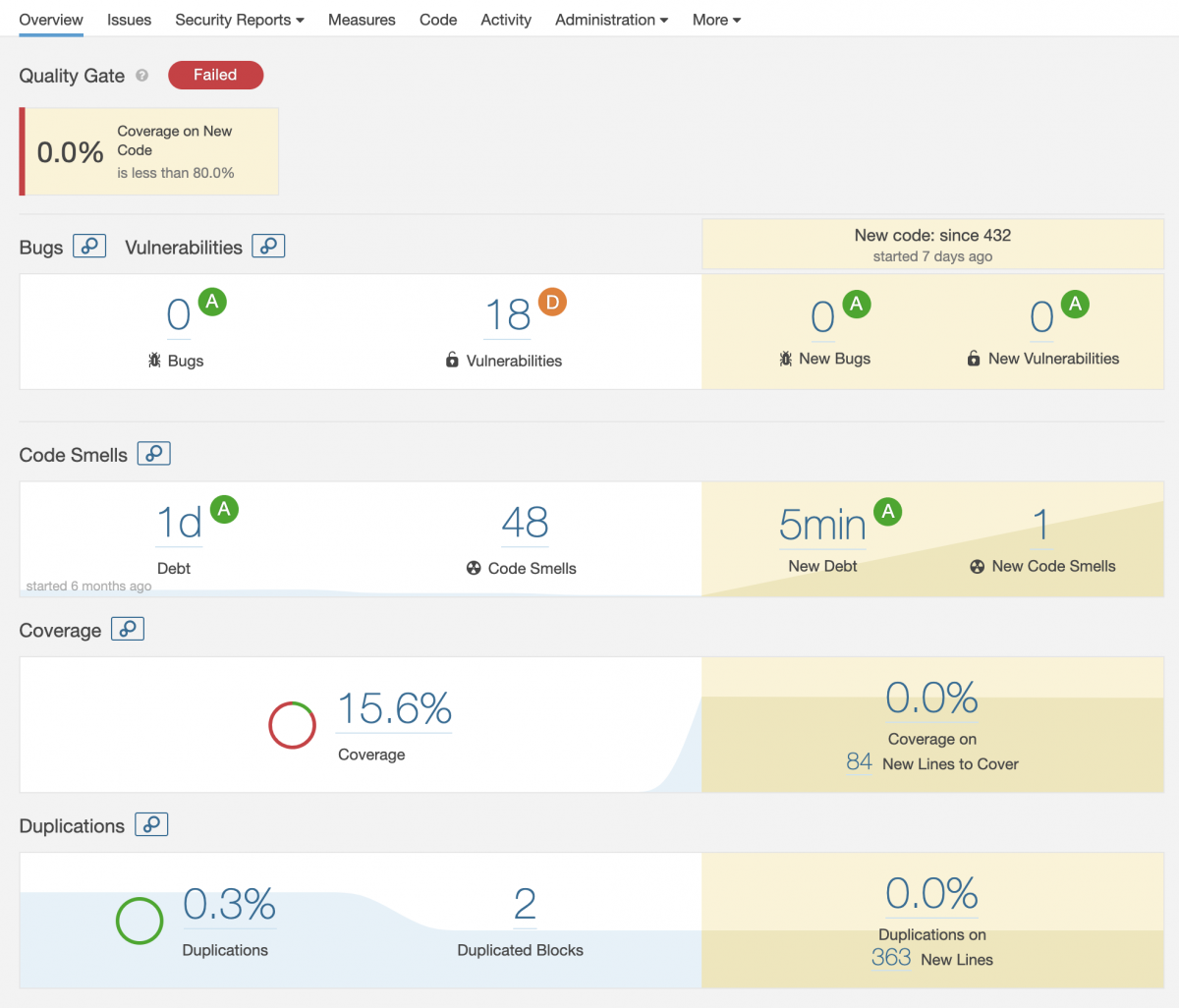

Code quality metrics for Kotlin project on SonarQube

Code quality matters in software development projects and is worth tracking. Code coverage, technical debt, vulnerabilities in dependencies and conformance to code style rules are a couple of things you should follow. There are some de facto tools you can use to visualize these, and one of them is SonarQube.… Continue reading →