Month: January 2020

-

Monthly notes 47

Issue 47: 30.1.2020 War Stories #Y2038 problem. “It’s *already here*. Fix your stuff.” In many systems, time is represented as the number of seconds elapsed since 00:00:00 UTC on 1 January 1970 and stored as a signed 32-bit integer. Such implementations can’t encode times after 03:14:07 UTC on 19 January 2038. (from @walokra) Ops Lessons We All Learn…
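The 2038 limit follows directly from the largest signed 32-bit value, 2^31 − 1 = 2147483647 seconds. The minimal Python sketch below shows that arithmetic and what happens one second later. It assumes two's-complement wraparound, which is what most C platforms produce in practice, although signed overflow is technically undefined behavior in C. The helper names `to_int32` and `from_unix_seconds` are hypothetical and exist only for this illustration.

```python
from datetime import datetime, timedelta, timezone

EPOCH = datetime(1970, 1, 1, tzinfo=timezone.utc)
INT32_MAX = 2**31 - 1  # 2147483647, the largest signed 32-bit value
INT32_MIN = -(2**31)   # -2147483648

def to_int32(value: int) -> int:
    """Hypothetical helper: wrap an integer to a signed 32-bit
    two's-complement value, the way a 32-bit time_t would overflow."""
    value &= 0xFFFFFFFF
    return value - 2**32 if value >= 2**31 else value

def from_unix_seconds(seconds: int) -> datetime:
    """Hypothetical helper: seconds since the Unix epoch -> UTC datetime.
    Uses timedelta arithmetic so negative values work on every platform."""
    return EPOCH + timedelta(seconds=seconds)

# Last moment a signed 32-bit counter can represent
print(from_unix_seconds(INT32_MAX).isoformat())  # 2038-01-19T03:14:07+00:00

# One second later the counter wraps around to the most negative value...
wrapped = to_int32(INT32_MAX + 1)
print(wrapped)  # -2147483648

# ...which decodes as a date in 1901
print(from_unix_seconds(wrapped).isoformat())  # 1901-12-13T20:45:52+00:00
```

Storing the counter in a signed 64-bit integer pushes the limit billions of years into the future, which is why the usual fix is widening `time_t` and any on-disk or on-wire fields that hold it.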

-

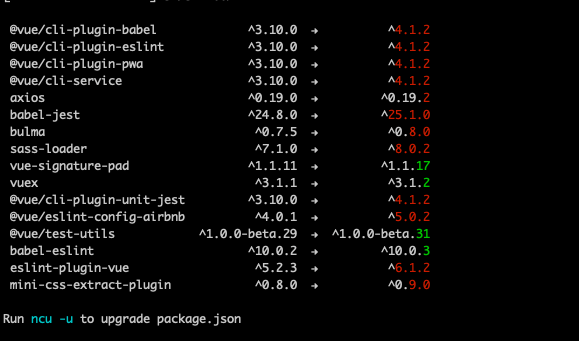

Tracking vulnerabilities and keeping Node.js packages up to date

Software evolves quickly and new versions of libraries are released constantly, so how do you keep track of updated dependencies and vulnerable libraries? Managing dependencies has always been somewhat of a pain point, but it is an important part of software development: it’s better to track vulnerabilities and run fresh packages than to get pwned.… Continue reading →

-

Notes from security in the age of Docker & Kubernetes

Security is often the more obscure part of software development. While container runtimes provide good isolation from the host operating system when you use Docker and run containers in Kubernetes, you should not assume you are free from exploits. Keep following the same best practices you would use without containers.… Continue reading →